Chain store companies are increasing their use of predictive analytics in many areas, including market optimization, sales forecasting, direct marketing, and localization of product offerings.

The value of simulating decision outcomes before investing financial and human resources is compelling. Therefore, much attention has been focused on algorithms and user interfaces that make it possible for analysts and executives to compare alternatives using “what if” scenarios.

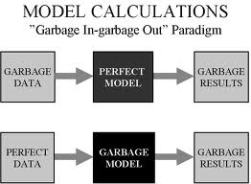

So here’s a big fat “WHAT IF”: what if the data used in the models are not accurate? What if the locations of your existing stores or competitors don’t reflect “ground truth”? The answer is that you will get maps, reports, and sales forecasts that appear REALISTIC but are not REAL.

After years of resistance, chain store executives are becoming eager to use more “science” in the planning and evaluation of store locations. What does “science” mean in the context of a complex system like the retail marketplace? It’s certainly not a set of well-defined cause-and-effect relationships that can be predicted with precision, such as the movement of the planets.

A better description would be “fact-based” decision-making, which means capturing relevant and accurate data about the marketplace and inferring conditions that are favorable for the operation of chain stores. These facts include trade area demographics, proximity of sister stores and competitors, and the quality of the site. It is certainly possible to create mathematical models that simulate the interaction of these factors in order to forecast sales, but the ultimate decision to approve an investment requires the experience and judgment of experts who use modeled estimates with other sources of facts and opinions.

Regardless of the specific approach to making decisions, if the “facts” are not accurate, mistakes can happen. Models will generate inaccurate estimates, and experts could be misled by maps or reports that have existing stores or competitors in the wrong locations.

It’s not easy to capture and validate accurate location information, especially when the database covers a large geographic area such as the entire US. When you are designing decision support systems that rely upon these data, invest in content and processes that will make your software and models give you reliable answers.

Here are some of the keys to good data quality:

- Research the sources of content, including demographic data and business locations, and compile a list that compares quality and price so that you can find the right combination for your needs. Some data sources are VERY expensive and not much better than others that are more affordable. Others are VERY cheap, but you get what you pay for.

- Design a process for getting your staff to update and correct business locations when they find differences between maps or reports and what’s in the real world. Sometimes it’s necessary to designate a “chief editor” for changes to make sure that locations are not duplicated or changed incorrectly (e.g., a new longitude coordinate is missing the negative sign).

- Select a software platform that allows you to make the changes yourself rather than relying upon a vendor to make them. Ideally you should be able to have changes synchronized across all platforms and devices, whether desktop, web browser, or mobile (e.g., smartphone or iPad).

- When you are getting ready to spend a lot of time on a market plan or site evaluation, spend extra time validating the locations (existing stores, traffic generators and competitors) in that area. It’s not practical to try to validate the entire US at once, with the exception of your own store locations.
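Some of the checks a “chief editor” performs can be automated before a change is accepted. Below is a minimal Python sketch, not a finished tool, of two such checks: flagging a longitude that looks like it lost its negative sign, and listing records that may be duplicates. The record fields (`id`, `name`, `lat`, `lon`), the function names, the rough US bounding box, and the rounding tolerance are all assumptions made for illustration; adjust them to your own database and coverage area.

```python
from collections import defaultdict

# Rough bounding box for the US including Alaska and Hawaii.
# These limits are an assumption for illustration; tune them to your coverage area.
US_LAT = (18.0, 72.0)
US_LON = (-180.0, -65.0)


def check_location(lat, lon):
    """Return a list of problems found for one coordinate pair."""
    problems = []
    if not (US_LAT[0] <= lat <= US_LAT[1]):
        problems.append("latitude outside US range")
    if not (US_LON[0] <= lon <= US_LON[1]):
        # A positive longitude that becomes valid when negated is the
        # classic "missing negative sign" data-entry error.
        if US_LON[0] <= -lon <= US_LON[1]:
            problems.append("longitude may be missing its negative sign")
        else:
            problems.append("longitude outside US range")
    return problems


def find_possible_duplicates(records, decimals=4):
    """Group record ids that share a normalized name and rounded coordinates.

    Rounding to 4 decimal places groups points within roughly 10 meters.
    This is a crude heuristic: two nearby points can still land in
    different groups, so treat the result as candidates for human review.
    """
    groups = defaultdict(list)
    for rec in records:
        key = (
            rec["name"].strip().lower(),
            round(rec["lat"], decimals),
            round(rec["lon"], decimals),
        )
        groups[key].append(rec["id"])
    return [ids for ids in groups.values() if len(ids) > 1]


# Hypothetical sample records, invented for this example.
records = [
    {"id": 1, "name": "Store A", "lat": 41.8781, "lon": -87.6298},
    {"id": 2, "name": "Store A ", "lat": 41.87811, "lon": -87.62981},
    {"id": 3, "name": "Store B", "lat": 34.0522, "lon": 118.2437},
]

for rec in records:
    for problem in check_location(rec["lat"], rec["lon"]):
        print(rec["id"], rec["name"].strip(), "->", problem)

print("possible duplicates:", find_possible_duplicates(records))
```

Checks like these only narrow down what a person needs to look at. A location that passes both can still be on the wrong side of the street, so field validation remains necessary.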

Your models, maps, and reports will only be as good as your data. Before you spend $100,000 or more on a system to support your real estate planning and site selection, make sure that you are powering it with good fuel!
